Further Study

What Next?

So far, we have discussed only supervised models. Supervised learning involves training a model on a labeled dataset, meaning each input comes with the correct output. The goal is for the model to learn to predict these labels on new, unseen data.

Pros:

High accuracy when enough labeled data is available.

Predictable performance that is easy to evaluate, because predictions can be compared directly with known labels.

Well-supported with mature libraries.

Cons:

Requires large, clean labeled datasets (can be expensive/time-consuming to produce).

May overfit if there is too little data or the model is too complex.
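To make the labeled-data workflow concrete, here is a minimal sketch in plain Python. It assumes a made-up two-feature dataset and uses a 1-nearest-neighbour classifier, chosen here only for brevity. The names `train`, `test`, and `predict` are illustrative and not taken from any library.

```python
# Toy labeled dataset: each entry is (feature vector, correct label).
# All values are invented for illustration.
train = [((1.0, 1.2), "a"), ((0.8, 1.0), "a"), ((1.1, 0.9), "a"),
         ((3.0, 3.2), "b"), ((3.1, 2.9), "b"), ((2.8, 3.0), "b")]

# Held-out examples the model never sees while "training".
test = [((0.9, 1.1), "a"), ((3.0, 3.0), "b")]


def squared_distance(p, q):
    """Squared Euclidean distance between two feature vectors."""
    return sum((pi - qi) ** 2 for pi, qi in zip(p, q))


def predict(x, labeled_points):
    """Return the label of the training point closest to x."""
    _, label = min(labeled_points, key=lambda item: squared_distance(x, item[0]))
    return label


# Evaluation is simple because the true labels of the test set are known.
predictions = [predict(x, train) for x, _ in test]
accuracy = sum(pred == y for pred, (_, y) in zip(predictions, test)) / len(test)
print(predictions, accuracy)
```

Evaluating on held-out examples rather than on the training points is what reveals overfitting: a model that merely memorizes its training data can score perfectly there and still fail on new inputs.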

Unsupervised Models

Unsupervised learning works with unlabeled data. The model tries to discover hidden patterns or structures in the data.

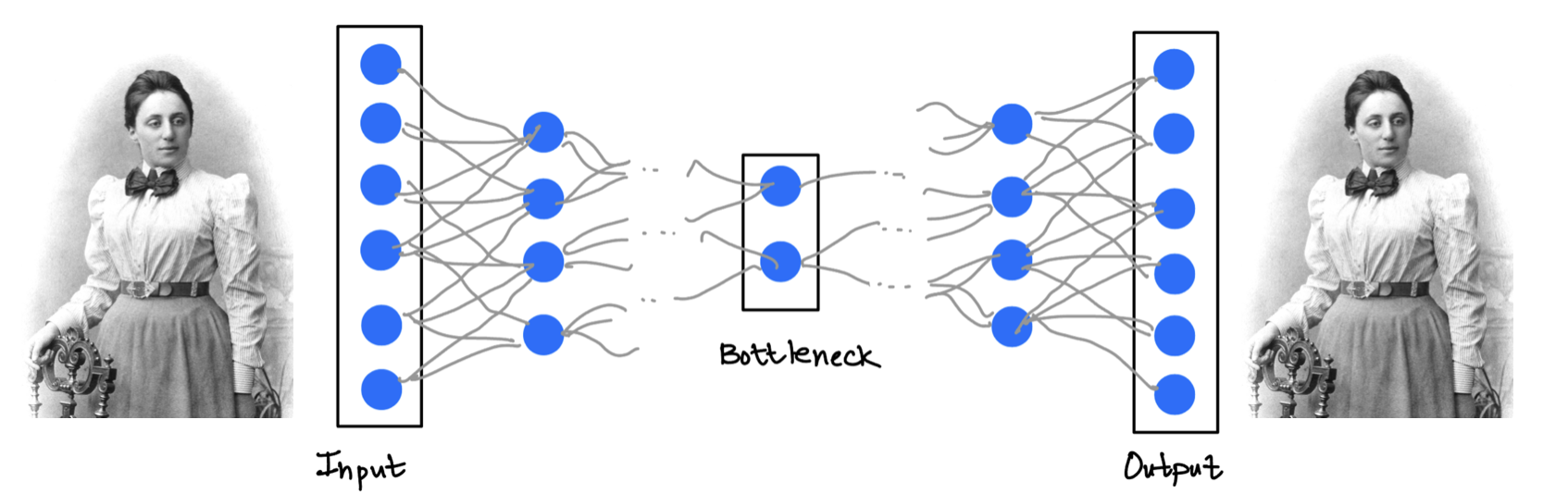

Example: clustering customers into groups based on purchasing behavior. The model finds structure without being told which group each customer belongs to. Another interesting example is the autoencoder, a neural network trained to reconstruct its own input through a compressed intermediate representation.
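The customer-clustering idea can be sketched with k-means, one common clustering algorithm. This is a minimal illustration, assuming invented customer data, two clusters, and hand-picked starting centroids. The function `k_means` is written here from scratch and is not a library call.

```python
# Hypothetical customers: (purchases per month, average basket value).
# Note that no labels are provided anywhere.
customers = [(2, 15.0), (3, 18.0), (2, 20.0), (12, 80.0), (14, 95.0), (13, 88.0)]


def squared_distance(p, q):
    return sum((pi - qi) ** 2 for pi, qi in zip(p, q))


def mean_point(points):
    """Coordinate-wise mean of a non-empty list of points."""
    return tuple(sum(coord) / len(points) for coord in zip(*points))


def k_means(points, centroids, n_iterations=10):
    """Alternate between assigning points to the nearest centroid and
    moving each centroid to the mean of its assigned points."""
    for _ in range(n_iterations):
        # Assignment step: index of the nearest centroid for every point.
        assignments = [
            min(range(len(centroids)), key=lambda k: squared_distance(p, centroids[k]))
            for p in points
        ]
        # Update step: recompute each centroid; keep it if its cluster is empty.
        new_centroids = []
        for k in range(len(centroids)):
            members = [p for p, a in zip(points, assignments) if a == k]
            new_centroids.append(mean_point(members) if members else centroids[k])
        centroids = new_centroids
    return assignments, centroids


# Assumption: start from one customer of each apparent group.
assignments, centroids = k_means(customers, centroids=[customers[0], customers[3]])
print(assignments)
```

The sketch leaves the features unscaled, so the basket value dominates the distance. In practice the number of clusters and the feature scaling are assumptions the user must supply, which is one reason unsupervised results are hard to validate.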

Pros:

Works without labeled data.

Helps with data exploration, dimensionality reduction, and pattern discovery.

Cons:

Hard to evaluate the model (no ground truth).

May find patterns that are not meaningful or useful.

Often requires strong assumptions about data structure.

Example: Autoencoder
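As a rough illustration of the autoencoder idea, the sketch below trains the smallest possible linear version in plain Python: an encoder that compresses 2-D points to a single number and a decoder that expands it back, fitted by gradient descent on the reconstruction error. The data, starting weights, learning rate, and function names are all assumptions made for this example. Real autoencoders are usually nonlinear neural networks built with a deep-learning library.

```python
# Toy unlabeled data: 2-D points that all lie on the line y = 2x (invented).
data = [(t, 2.0 * t) for t in (-1.0, -0.5, 0.0, 0.5, 1.0)]


def reconstruct(x, w, v):
    z = w[0] * x[0] + w[1] * x[1]      # encoder: 2-D input -> 1-D code
    return z, (v[0] * z, v[1] * z)     # decoder: 1-D code -> 2-D reconstruction


def mean_squared_error(points, w, v):
    total = 0.0
    for x in points:
        _, x_hat = reconstruct(x, w, v)
        total += (x_hat[0] - x[0]) ** 2 + (x_hat[1] - x[1]) ** 2
    return total / len(points)


def train(points, n_steps=500, learning_rate=0.05):
    w, v = [0.5, 0.5], [0.5, 0.5]      # arbitrary fixed starting weights
    n = len(points)
    for _ in range(n_steps):
        grad_w, grad_v = [0.0, 0.0], [0.0, 0.0]
        for x in points:
            z, x_hat = reconstruct(x, w, v)
            residual = (x_hat[0] - x[0], x_hat[1] - x[1])
            # Derivative of the squared error with respect to the code z.
            dz = 2.0 * (residual[0] * v[0] + residual[1] * v[1])
            for i in range(2):
                grad_v[i] += 2.0 * residual[i] * z / n
                grad_w[i] += dz * x[i] / n
        # Plain gradient-descent update on both weight vectors.
        w = [w[i] - learning_rate * grad_w[i] for i in range(2)]
        v = [v[i] - learning_rate * grad_v[i] for i in range(2)]
    return w, v


initial_error = mean_squared_error(data, [0.5, 0.5], [0.5, 0.5])
w, v = train(data)
final_error = mean_squared_error(data, w, v)
print(initial_error, final_error)
```

The only training signal is the input itself, so no labels are needed. Because these points lie on a line, a one-number code is enough to reconstruct them almost exactly; the model has discovered that the data is effectively one-dimensional.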

Reinforcement Learning

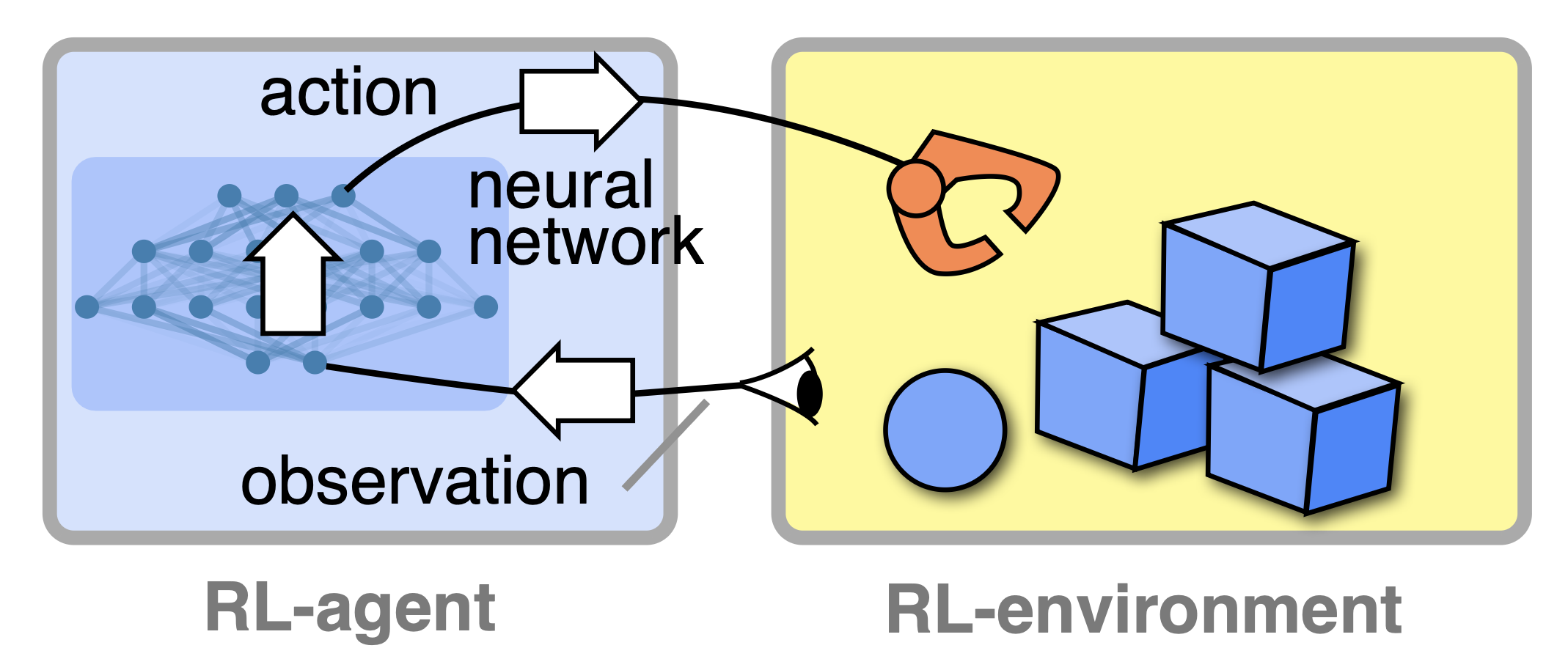

Reinforcement learning is based on an agent interacting with an environment. The agent learns through trial and error, receiving rewards or penalties for actions it takes.

Example: training a robot to walk or a program to play chess. The agent learns strategies that maximize its cumulative reward, trading off immediate against long-term gains.

Pros:

Excellent for sequential decision-making and control tasks.

Learns directly from interaction without needing labeled datasets.

Cons:

Often slow and computationally expensive.

May require a lot of exploration or simulation before learning effectively.

Sensitive to the choice of reward structure.
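The agent-environment loop described above can be sketched with tabular Q-learning, one standard reinforcement learning algorithm. This is a minimal illustration under assumed settings: a made-up five-state corridor in which only reaching the rightmost state gives a reward, with hand-picked values for the learning rate, discount factor, and exploration rate.

```python
import random

N_STATES = 5            # corridor states 0..4; state 4 is the goal
ACTIONS = (-1, +1)      # action index 0 = step left, 1 = step right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.2   # assumed hyperparameters


def step(state, action_index):
    """Environment: move along the corridor; reward 1 only on reaching the goal."""
    next_state = min(max(state + ACTIONS[action_index], 0), N_STATES - 1)
    reward = 1.0 if next_state == N_STATES - 1 else 0.0
    return next_state, reward


def choose_action(q_row, rng):
    """Epsilon-greedy: usually exploit the best known action, sometimes explore."""
    if rng.random() < EPSILON:
        return rng.randrange(len(ACTIONS))
    best = max(q_row)
    return rng.choice([i for i, q in enumerate(q_row) if q == best])


def train(n_episodes=500, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]   # Q-value per (state, action)
    for _ in range(n_episodes):
        state = 0
        while state != N_STATES - 1:
            action = choose_action(q[state], rng)
            next_state, reward = step(state, action)
            # Q-learning update: move the estimate toward
            # reward + discounted value of the best next action.
            target = reward + GAMMA * max(q[next_state])
            q[state][action] += ALPHA * (target - q[state][action])
            state = next_state
    return q


q_table = train()
policy = ["left" if row[0] > row[1] else "right" for row in q_table[:-1]]
print(policy)
```

The agent is never told that moving right is correct. It only sees a reward at the goal, and the discount factor propagates that value backward to earlier states over many episodes. Changing the reward, for example by rewarding every step, would change the learned behavior, which illustrates the sensitivity to reward design noted above.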

Figure 1: Image: Florian Marquardt lecture notes (https://