Fundamentals of Statistics

Before starting with the details of machine learning, let us first recap some fundamental concepts of statistics. These are the terms that you will use a lot if you stick with the business of machine learning. Machine learning is as much a science as it is a form of painting, where statistics and mathematics are the paint and the brush strokes.

Probability distribution function

Let us start with the definition of a probability distribution function (PDF). These come in two flavours, discrete and continuous. To qualify as a probability distribution, each of them has to obey certain properties.

Discrete probability distribution

The probability distribution of a discrete random variable is a list of probabilities associated with each of its possible outcomes. It is also sometimes called the probability mass function. Suppose a random variable $X$ may take $k$ different values, with the probability that $X = x_i$ defined to be $P(X = x_i) = p_i$. Then the probabilities $p_i$ must satisfy the following:

1: $0 \le p_i \le 1$ for each $i$.

2: $\sum_{i=1}^{k} p_i = 1$.
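The two properties above can be checked mechanically. The following is a minimal sketch; the helper name `is_valid_pmf` and the tolerance `tol` are illustrative choices, not part of any library.

```python
import math

def is_valid_pmf(probs, tol=1e-9):
    """Return True if probs could be a discrete probability distribution."""
    # Property 1: every probability lies between 0 and 1.
    in_range = all(0.0 <= p <= 1.0 for p in probs)
    # Property 2: the probabilities sum to 1 (up to floating-point error).
    sums_to_one = math.isclose(sum(probs), 1.0, abs_tol=tol)
    return in_range and sums_to_one

fair_die = [1 / 6] * 6
print(is_valid_pmf(fair_die))          # True
print(is_valid_pmf([0.5, 0.6, -0.1]))  # False: sums to 1 but has a negative entry
```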

Binomial distribution

This is the distribution where only two outcomes are possible, success and failure, with probabilities $p$ and $1 - p$. The probability of $k$ successes in $n$ independent trials is then

$$P(X = k) = \binom{n}{k} p^{k} (1 - p)^{n - k}$$
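As a sanity check, the binomial probability $\binom{n}{k} p^k (1-p)^{n-k}$ can be evaluated directly with the standard library. This is only a sketch; the function name `binomial_pmf` is an illustrative choice, and in practice `scipy.stats.binom.pmf` (used in the next cell) does the same job.

```python
import math

def binomial_pmf(k, n, p):
    """Probability of exactly k successes in n independent trials."""
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)

# 5 successes in 10 fair trials: C(10, 5) / 2**10
print(binomial_pmf(5, 10, 0.5))  # 0.24609375

# Summing over all possible k recovers a total probability of 1.
total = sum(binomial_pmf(k, 10, 0.3) for k in range(11))
print(round(total, 12))  # 1.0
```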

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

from scipy.stats import norm, binom

import ipywidgets as widgets

from IPython.display import display

def plot_binomial(n, p, k_highlight):
    k = np.arange(0, n + 1)  # Possible number of successes
    probs = binom.pmf(k, n, p)  # PMF values

    plt.figure(figsize=(10, 6))
    markerline, stemlines, baseline = plt.stem(k, probs)
    plt.setp(markerline, color='b', label="PMF")
    plt.setp(stemlines, color='b')

    # Highlight one point in red
    if 0 <= k_highlight <= n:
        plt.plot(k_highlight, binom.pmf(k_highlight, n, p), 'ro', label=f"P(X={k_highlight})")

    plt.title(f"Binomial Distribution PMF (n={n}, p={p})")
    plt.xlabel("Number of Successes (k)")
    plt.ylabel("Probability")
    plt.legend()
    plt.grid(True)
    plt.show()

widgets.interact(
    plot_binomial,
    n=widgets.IntSlider(value=10, min=1, max=50, step=1, description='Trials (n)'),
    p=widgets.FloatSlider(value=0.5, min=0.01, max=1.0, step=0.01, description='Success Prob (p)'),
    k_highlight=widgets.IntSlider(value=5, min=0, max=50, step=1, description='k Highlight')
)

❓ Exercise

Q1: For a binomial distribution with $n = 30$ trials and a success probability of $p = 0.5$, what is the probability of getting 10 successes?

Answer: The result is 0.02798. You can check this by using the function binom.pmf(10, 30, 0.5).

❓ Exercise

Q2: When is the binomial distribution most symmetric?

Answer: A binomial distribution is most symmetric when p = 0.5.

Continuous probability distribution

As the name suggests, in this case the outcomes can take any continuous value. The probability of any single exact value is zero, so one can only talk about outcomes falling between one number and another. For example, it is fair to ask for the probability that a random outcome $X$ lies in the range $[a, b]$; this probability is the area under the density curve between $a$ and $b$. The curve, which represents a function $f(x)$, must satisfy the following:

1: The curve has no negative values ($f(x) \ge 0$ for all values of $x$).

2: The total area under the curve is equal to 1.
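To make "probability as area under the curve" concrete, here is a minimal sketch using a toy density $f(x) = 2x$ on $[0, 1]$. Both the density and the helper `trapezoid_area` are illustrative assumptions chosen so that the areas are easy to verify by hand.

```python
def trapezoid_area(f, a, b, steps=10_000):
    """Approximate the area under f between a and b with the trapezoid rule."""
    h = (b - a) / steps
    total = 0.5 * (f(a) + f(b))
    for i in range(1, steps):
        total += f(a + i * h)
    return total * h

def density(x):
    """Toy density: f(x) = 2x on [0, 1], zero elsewhere."""
    return 2.0 * x if 0.0 <= x <= 1.0 else 0.0

# Property 2: the total area is 1.
print(round(trapezoid_area(density, 0.0, 1.0), 6))  # 1.0
# P(0.5 <= X <= 1) is the area over that range.
print(round(trapezoid_area(density, 0.5, 1.0), 6))  # 0.75
```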

Gaussian Distribution

The Gaussian, or normal, distribution is something you will find everywhere in data science. Normality is also an assumption behind many data science algorithms.

A normal distribution has a bell-shaped density curve described by its mean $\mu$ and standard deviation $\sigma$. The density curve is symmetric and centered about its mean, with its spread determined by its standard deviation, so data near the mean occur more frequently than data far from the mean. The probability density function of a normal distribution with mean $\mu$ and standard deviation $\sigma$ at a given point $x$ is given by:

$$f(x) = \frac{1}{\sigma \sqrt{2\pi}} \exp\left( -\frac{(x - \mu)^2}{2\sigma^2} \right)$$

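For the more mathematically inclined, you can integrate this distribution over all $x$ and confirm that the total area under the curve is 1, that is, $\int_{-\infty}^{\infty} f(x)\, dx = 1$.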
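The normalization of the Gaussian density can also be checked numerically. The sketch below uses only the standard library; `normal_pdf` and `integrate` are illustrative helper names, and the values $\mu = 2$, $\sigma = 3$ are arbitrary.

```python
import math

def normal_pdf(x, mu=0.0, sigma=1.0):
    """Gaussian density with mean mu and standard deviation sigma."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))

def integrate(f, a, b, steps=20_000):
    """Trapezoid-rule approximation of the integral of f from a to b."""
    h = (b - a) / steps
    total = 0.5 * (f(a) + f(b))
    for i in range(1, steps):
        total += f(a + i * h)
    return total * h

mu, sigma = 2.0, 3.0
pdf = lambda x: normal_pdf(x, mu, sigma)

# Area over +/- 10 sigma, which captures essentially all of the mass.
print(integrate(pdf, mu - 10 * sigma, mu + 10 * sigma))  # close to 1

# Probability of landing within one sigma of the mean, compared to the
# closed form erf(1 / sqrt(2)).
print(integrate(pdf, mu - sigma, mu + sigma))  # close to 0.6827
print(math.erf(1.0 / math.sqrt(2.0)))
```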

def plot_normal(mu, sigma):
    x = np.linspace(mu - 8*sigma, mu + 8*sigma, 1000)
    y = norm.pdf(x, mu, sigma)

    plt.figure(figsize=(8, 4))
    plt.plot(x, y)
    plt.xlim(-20, 20)
    #plt.ylim(0, 20)
    plt.title("Gaussian Distribution")
    plt.grid(True)
    plt.show()

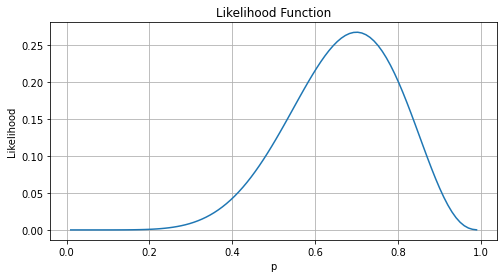

widgets.interact(plot_normal, mu=(-5, 5, 0.5), sigma=(0.1, 5.0, 0.1))

Likelihood vs Probability

Probability: Given parameters, what’s the chance of observing the data?

Likelihood: Given data, how likely are the parameters?

Example:

Probability: “Given $p = 0.7$, what’s the probability of 3 heads in 5 tosses?”

Likelihood: “Given 3 heads in 5 tosses, what is the most likely value of $p$?”
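Both questions use the same binomial formula and differ only in what is held fixed. The sketch below answers each one with the standard library; `binomial_pmf` is an illustrative helper, and the grid of candidate $p$ values is an arbitrary choice.

```python
import math

def binomial_pmf(k, n, p):
    """Probability of exactly k successes in n independent trials."""
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)

# Probability: the parameter is fixed (p = 0.7) and we ask about the data.
prob = binomial_pmf(3, 5, 0.7)
print(round(prob, 4))  # 0.3087

# Likelihood: the data are fixed (3 heads in 5 tosses) and we scan over p.
grid = [i / 100 for i in range(1, 100)]
best_p = max(grid, key=lambda p: binomial_pmf(3, 5, p))
print(best_p)  # 0.6
```

The most likely value on the grid matches the observed fraction of heads, 3/5.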

# Likelihood visualization

obs_heads = 7

total_flips = 10

p_vals = np.linspace(0.01, 0.99, 100)

likelihoods = binom.pmf(obs_heads, total_flips, p_vals)

plt.figure(figsize=(8, 4))

plt.plot(p_vals, likelihoods)

plt.title("Likelihood Function")

plt.xlabel("p")

plt.ylabel("Likelihood")

plt.grid(True)

plt.show()

❓ Exercise

Q3: Given 8 heads out of 10 tosses, sketch or estimate the maximum likelihood estimate (MLE) for $p$.

Answer: The MLE for $p$ is $\frac{8}{10} = 0.8$, which is simply the observed fraction of heads. On a likelihood plot, the MLE is the mode of the curve, that is, the value of $p$ at which the likelihood peaks. For the 7-heads-in-10-flips example plotted above, the peak sits at $p = 0.7$.
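Why the peak lands on the observed fraction can be seen from a short derivation, sketched here for $k$ heads in $n$ tosses. The symbols $L(p)$ for the likelihood and $\hat{p}$ for the estimate are notation introduced for this sketch.

```latex
\begin{aligned}
L(p) &= \binom{n}{k}\, p^{k} (1 - p)^{n - k} \\
\log L(p) &= \log \binom{n}{k} + k \log p + (n - k) \log (1 - p) \\
\frac{d}{dp} \log L(p) &= \frac{k}{p} - \frac{n - k}{1 - p} = 0
\quad\Longrightarrow\quad \hat{p} = \frac{k}{n}
\end{aligned}
```

With $k = 8$ and $n = 10$ this gives $\hat{p} = 0.8$.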

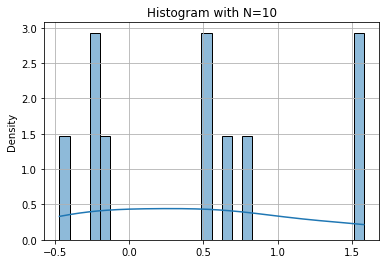

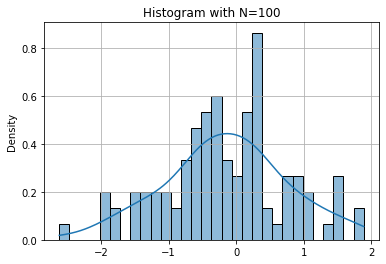

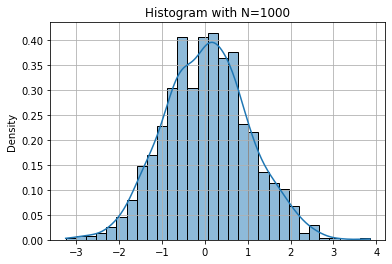

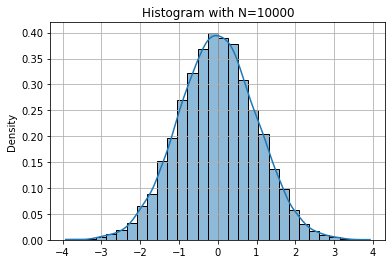

Histograms and Distribution Approximation

A histogram approximates the probability distribution of data. With more samples, it resembles the true distribution.

Key Concepts:

Histogram shape depends on sample size and bin width.

More data yields a smoother distribution.

In high-energy physics (HEP), most of the time we will be looking at histograms of observed results from the detector. The target of ML in this case is essentially to find a function that fits this empirical distribution.
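The claim that a histogram approaches the true distribution as the sample grows can be quantified. The sketch below is one possible measure, not a standard one: it draws standard-normal samples with the standard library and reports the mean absolute gap between the density histogram and the true density at the bin centers. The function name `histogram_error`, the bin range, and the error measure are all assumptions made for illustration.

```python
import math
import random

def normal_pdf(x):
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def histogram_error(n_samples, bins=30, lo=-4.0, hi=4.0, seed=42):
    """Mean absolute gap between a density histogram and the N(0, 1) pdf."""
    rng = random.Random(seed)
    width = (hi - lo) / bins
    counts = [0] * bins
    for _ in range(n_samples):
        x = rng.gauss(0.0, 1.0)
        if lo <= x < hi:  # samples outside the range are not binned
            index = min(int((x - lo) / width), bins - 1)
            counts[index] += 1
    error = 0.0
    for i, count in enumerate(counts):
        center = lo + (i + 0.5) * width
        density = count / (n_samples * width)  # convert counts to a density
        error += abs(density - normal_pdf(center))
    return error / bins

for n in [10, 100, 1000, 10000]:
    print(n, round(histogram_error(n), 4))
```

The printed gap should shrink as the sample size grows, which is the numerical counterpart of the histograms looking smoother.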

np.random.seed(42)

for N in [10, 100, 1000, 10000]:
    data = np.random.normal(0, 1, N)
    sns.histplot(data, kde=True, stat="density", bins=30)
    # A kernel density estimate (KDE) plot is a method for visualizing the distribution
    # of observations in a dataset, analogous to a histogram. KDE represents the data
    # using a continuous probability density curve in one or more dimensions.
    plt.title(f"Histogram with N={N}")
    plt.grid(True)
    plt.show()

❓ Exercise

Q4: Why does the histogram with $N = 10$ look so different from the one with $N = 10000$?

Answer: With N=10, there are too few samples to capture the underlying distribution, resulting in high variance and noise.

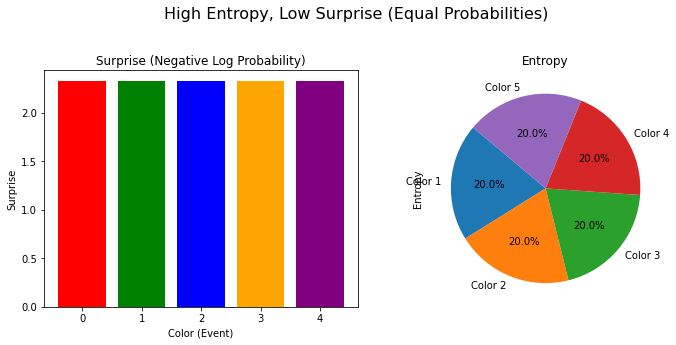

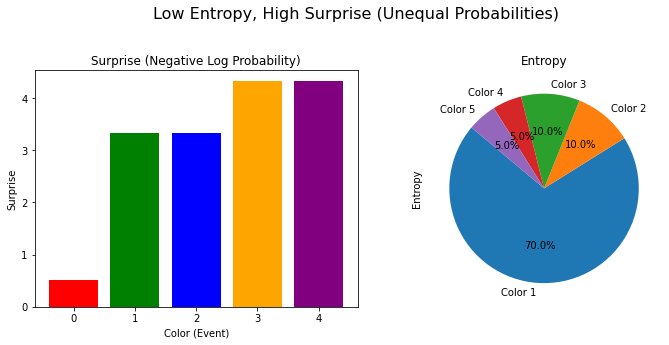

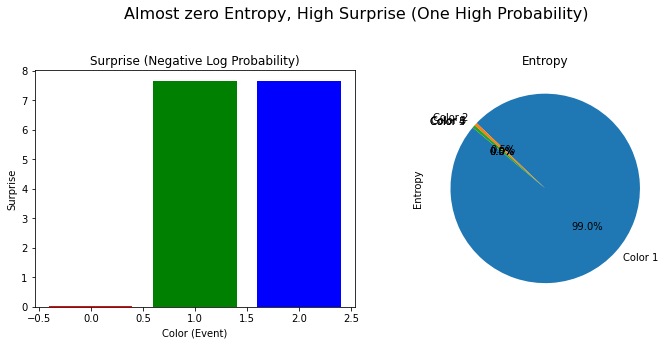

Surprise, entropy and Gini index

Surprise (Self-Information)

Surprise is a measure of how “unexpected” an event is. Therefore, the more probable the event is, the less surprising it should be. Mathematically, for an event $x$ with probability $p(x)$ it could have been $1/p(x)$, but to allow zero surprise for a certain event ($p(x) = 1$), the surprise (or self-information) is defined as

$$I(x) = \log_2 \frac{1}{p(x)} = -\log_2 p(x)$$

Shannon entropy

Entropy is the average surprise across all possible outcomes.

For a random variable $X$ with outcomes $x_1, \dots, x_n$ and probabilities $p(x_1), \dots, p(x_n)$, Shannon entropy is defined as

$$H(X) = -\sum_{i=1}^{n} p(x_i) \log_2 p(x_i)$$

Higher entropy --> more uncertainty; lower entropy --> less uncertainty
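A short worked example may help fix the scale. Taking entropy as the average of $-\log_2 p$ over the outcomes (written $H$ here), compare a fair coin with a coin that lands heads 90% of the time; the two coins are illustrative choices.

```latex
\begin{aligned}
H_{\text{fair}} &= -\left( 0.5 \log_2 0.5 + 0.5 \log_2 0.5 \right) = 1 \text{ bit} \\
H_{\text{biased}} &= -\left( 0.9 \log_2 0.9 + 0.1 \log_2 0.1 \right) \approx 0.469 \text{ bits}
\end{aligned}
```

The biased coin is more predictable, so its entropy is lower.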

Gini index

Taking the logarithm is computationally more expensive, so in practice we often use other functions to quantify the same kind of uncertainty as entropy.

To measure the impurity of a dataset $D$, we use the Gini index, defined as

$$\mathrm{Gini}(D) = 1 - \sum_{i=1}^{k} p_i^2$$

where $k$ is the number of unique classes in the dataset and $p_i$ is the proportion of data samples belonging to class $i$ in the dataset $D$.
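In practice the class proportions are usually not given and have to be counted from labels. The following sketch does that with the standard library; `gini_from_labels` is an illustrative name, and the function assumes a non-empty list of labels.

```python
from collections import Counter

def gini_from_labels(labels):
    """Gini index of a dataset given as a non-empty list of class labels."""
    total = len(labels)
    counts = Counter(labels)
    # Each count / total is the proportion of samples in one class.
    return 1.0 - sum((count / total) ** 2 for count in counts.values())

print(gini_from_labels(["signal", "background", "signal", "background"]))  # 0.5
print(round(gini_from_labels(["a", "a", "a", "b"]), 4))  # 0.375
```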

❓ Exercise

Q5: If all samples belong to the same class, what is the Gini index?

Answer: One class has proportion 1 and all others have proportion 0, so the Gini index is $1 - 1^2 = 0$.

import math

def calculate_surprise(probability):
    """Calculates the surprise (negative log probability) for a given event."""
    if probability == 0:
        return float('inf')  # Handle the case where probability is zero to avoid log(0) error
    return -math.log2(probability)

def calculate_gini(probabilities):
    """Calculates the Gini index of a probability distribution."""
    gini = 1
    for probability in probabilities:
        gini -= probability**2
    return gini

def calculate_entropy(probabilities):
    """Calculates the entropy of a probability distribution."""
    entropy = 0
    for probability in probabilities:
        if probability > 0:  # Handle the case where probability is zero to avoid log(0) error
            entropy -= probability * math.log2(probability)
    return entropy

def visualize_surprise_and_entropy(probabilities, title="Surprise and Entropy"):
    """Visualizes surprise and entropy for different probability distributions."""
    n_colors = len(probabilities)
    colors = ['red', 'green', 'blue', 'orange', 'purple', 'brown', 'pink', 'cyan', 'magenta', 'black']  # Add more colors if needed

    plt.figure(figsize=(10, 5))
    plt.subplot(1, 2, 1)

    # Plot Surprise
    plt.title("Surprise (Negative Log Probability)")
    plt.bar(range(n_colors), [calculate_surprise(p) for p in probabilities], color=colors[:n_colors])
    plt.xlabel("Color (Event)")
    plt.ylabel("Surprise")

    # Plot Entropy
    plt.subplot(1, 2, 2)
    plt.title("Entropy")
    plt.pie(probabilities, labels=[f"Color {i+1}" for i in range(n_colors)], autopct='%1.1f%%', startangle=140)
    plt.ylabel("Entropy")

    plt.suptitle(title, fontsize=16)
    plt.tight_layout(rect=[0, 0.03, 1, 0.95])
    plt.show()

# Example 1: Equal probabilities (maximum entropy, equal surprise for every event)

probabilities_1 = [0.2, 0.2, 0.2, 0.2, 0.2]

print("The entropy in this case: % .2f" % calculate_entropy(probabilities_1))

print("The Gini index: % .2f" % calculate_gini(probabilities_1))

visualize_surprise_and_entropy(probabilities_1, title="High Entropy, Low Surprise (Equal Probabilities)")

The entropy in this case: 2.32

The Gini index: 0.80

# Example 2: Unequal probabilities (lower entropy, higher surprise for rarer events)

probabilities_2 = [0.7, 0.1, 0.1, 0.05, 0.05]

print("The entropy in this case: % .2f" % calculate_entropy(probabilities_2))

print("The Gini index: % .2f" % calculate_gini(probabilities_2))

visualize_surprise_and_entropy(probabilities_2, title="Low Entropy, High Surprise (Unequal Probabilities)")

The entropy in this case: 1.46

The Gini index: 0.48

# Example 3: One event with high probability, others with zero (zero entropy, infinite surprise for zero probability events)

probabilities_3 = [0.99, 0.005, 0.005, 0, 0]

print("The entropy in this case: % .2f" % calculate_entropy(probabilities_3))

print("The Gini index: % .2f" % calculate_gini(probabilities_3))

visualize_surprise_and_entropy(probabilities_3, title="Almost zero Entropy, High Surprise (One High Probability)")

The entropy in this case: 0.09

The Gini index: 0.02

❓ Exercise

Q6: For different situations that you can think of, do a quantitative analysis of the Gini index vs Shannon entropy.